The rise of large AI models, typified by ChatGPT's surge in popularity in 2023, has drawn renewed attention to InfiniBand. That attention stems from the fact that GPT models are trained on GPU clusters interconnected with InfiniBand, an industry-standard technology whose leading supplier today is NVIDIA. This article explains what InfiniBand is, how it is structured, and where it is used.

Understanding InfiniBand

InfiniBand is a high-speed, low-latency interconnect technology used mainly in data centers and high-performance computing (HPC) environments. It provides a switched fabric that connects servers, storage devices, and other network resources within a cluster or data center.

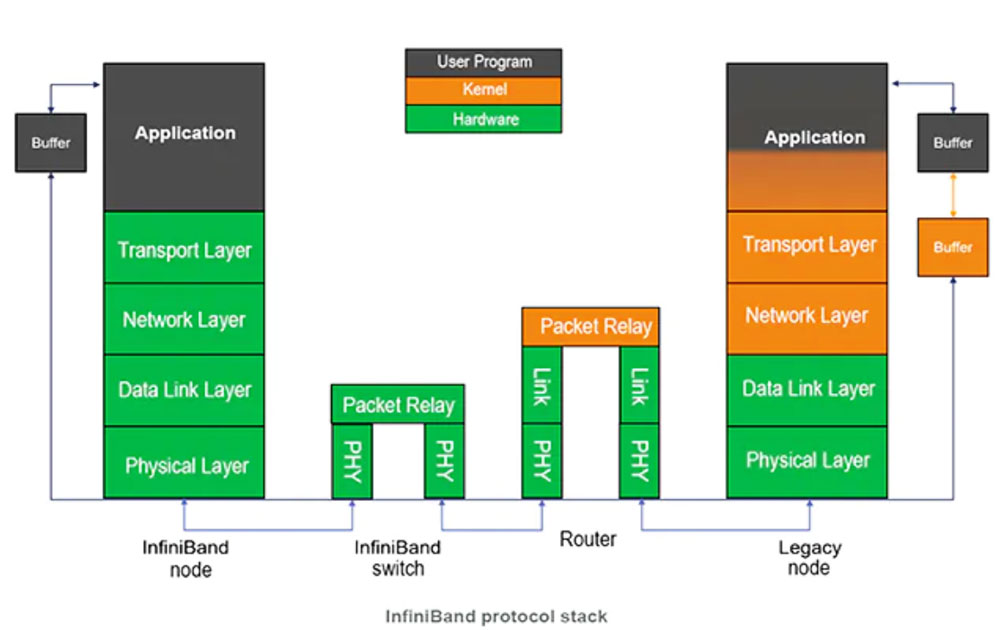

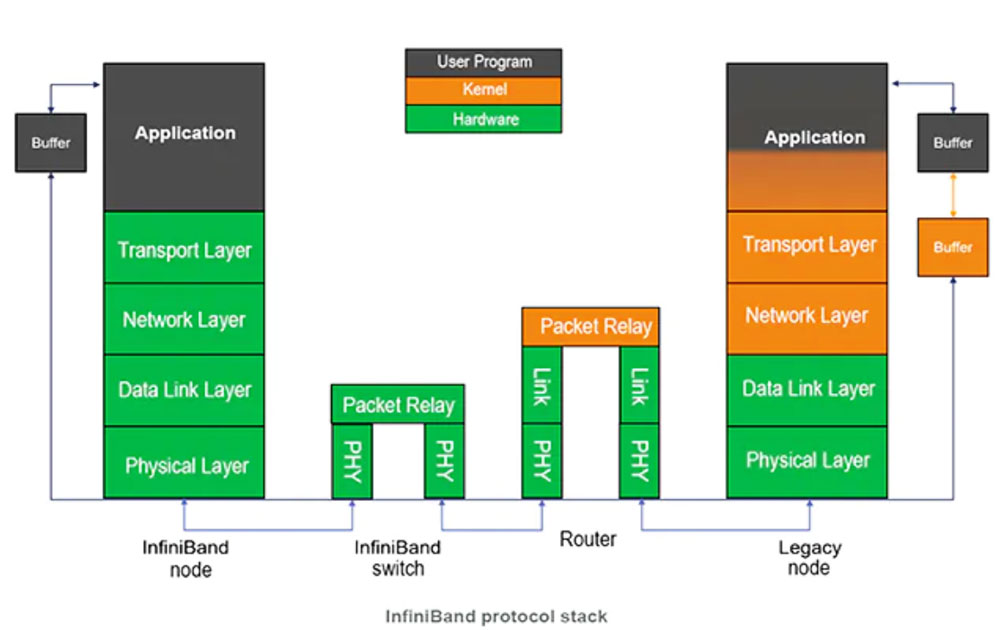

The InfiniBand architecture is layered, separating the physical and data link layers from the layers above them. The physical layer uses high-bandwidth serial links to establish direct point-to-point connections between devices, while the data link layer handles the transmission and reception of data packets between them. The upper layers provide features such as virtualization, quality of service (QoS), and remote direct memory access (RDMA), which together make InfiniBand well suited to HPC workloads that demand low latency and high bandwidth.

Key Features and Advantages of InfiniBand

High Speed and Scalability: InfiniBand combines fast links with room to scale. The InfiniBand Trade Association (IBTA) standard defines Single Data Rate (SDR) signaling at 2.5 Gb/s per lane, which gives 2.5 Gb/s on a 1X link, 10 Gb/s on a 4X link, and up to 30 Gb/s on a 12X link. Double Data Rate (DDR) and Quad Data Rate (QDR) signaling raise the per-lane rate to 5 Gb/s and 10 Gb/s, respectively, for a maximum of 120 Gb/s over 12X cables.
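The link rates quoted above follow from one multiplication: per-lane signaling rate times the number of lanes. The short Python sketch below reproduces those figures. The names `LANE_RATE_GBPS`, `LINK_WIDTHS`, and `link_rate_gbps` are illustrative and do not come from any InfiniBand library.

```python
# Per-lane signaling rates in Gb/s, as quoted in the text (illustrative table).
LANE_RATE_GBPS = {"SDR": 2.5, "DDR": 5.0, "QDR": 10.0}

# Link widths (number of lanes) named in the text: 1X, 4X, 12X.
LINK_WIDTHS = (1, 4, 12)


def link_rate_gbps(generation: str, width: int) -> float:
    """Aggregate signaling rate of a link: per-lane rate times lane count."""
    if generation not in LANE_RATE_GBPS:
        raise ValueError(f"unknown generation: {generation}")
    if width not in LINK_WIDTHS:
        raise ValueError(f"unsupported link width: {width}X")
    return LANE_RATE_GBPS[generation] * width


if __name__ == "__main__":
    # Print every generation/width combination covered by the text.
    for generation in LANE_RATE_GBPS:
        for width in LINK_WIDTHS:
            rate = link_rate_gbps(generation, width)
            print(f"{generation} {width:>2}X: {rate:6.1f} Gb/s")
```

These are raw signaling rates. Usable data throughput is somewhat lower once encoding and protocol overhead are taken into account.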

Low Latency: InfiniBand is known for very low latency, typically 3-5 microseconds and in some cases as low as 1-2 microseconds. Ethernet latencies, by comparison, typically range from 20-80 microseconds. Low latency is critical for clustered computing applications because it directly affects overall performance and how quickly applications can reach their data.
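To see why a few microseconds matter, consider a deliberately simplified model: an application that performs many dependent message exchanges in sequence, so that every exchange pays the full network latency. The Python sketch below applies the latency ranges quoted above to a hypothetical workload of one million such exchanges. The workload size and the function name `total_wait_seconds` are assumptions chosen for illustration, and the model ignores bandwidth, overlap, and software overhead.

```python
def total_wait_seconds(latency_us: float, exchanges: int) -> float:
    """Time spent waiting on the network for sequential, dependent exchanges."""
    return latency_us * exchanges / 1_000_000


# Hypothetical workload: one million back-to-back message exchanges.
EXCHANGES = 1_000_000

# Typical latency ranges in microseconds, taken from the text.
LATENCY_RANGES_US = {"InfiniBand": (3, 5), "Ethernet": (20, 80)}

if __name__ == "__main__":
    for fabric, (low_us, high_us) in LATENCY_RANGES_US.items():
        best = total_wait_seconds(low_us, EXCHANGES)
        worst = total_wait_seconds(high_us, EXCHANGES)
        print(f"{fabric}: {best:.0f} to {worst:.0f} s spent waiting on the network")
```

Under these assumptions the model gives 3 to 5 seconds of accumulated network wait for InfiniBand against 20 to 80 seconds for Ethernet. Real applications overlap communication with computation, so the gap in practice depends heavily on the workload.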

Low Power Consumption: InfiniBand is designed with power consumption in mind, an important concern for network managers. It draws less power than contemporary 10 Gb/s Ethernet technologies. In clustered data center architectures with many nodes, that reduction adds up to a lower total cost of operation and a more environmentally friendly facility.

Applications of InfiniBand

Today InfiniBand is widely used in supercomputers, storage systems, data centers, and clusters. Its high-speed data transfer gives applications fast access to large data sets and strong performance on data-intensive workloads, which makes it valuable across many industries. The following sections describe the areas where InfiniBand plays a central role:

1. InfiniBand in Supercomputing: InfiniBand is the interconnect behind many of the world's fastest supercomputers, where it supports a very high degree of parallelism. Its use across diverse scientific fields, with a reported adoption of over 70% among the top 500 supercomputers globally, shows how central it has become to high-performance computing systems.

2. InfiniBand in High-Performance Storage Systems: In high-performance storage systems, InfiniBand's high speed, low latency, and high bandwidth accelerate data transfer between servers and storage. This is especially useful for data-intensive applications that need fast access to large data sets.

3. InfiniBand in Data Centers and Clusters: In fiber optic data center and cluster environments, InfiniBand links servers to one another and to other devices, including storage systems. Its high-speed communication lets a cluster operate as a single system, which improves performance for applications that rely on parallel computing. InfiniBand also serves as a reliable, scalable interconnect for virtualization platforms, helping server resources to be used more efficiently.

4. InfiniBand in HPC Workloads: InfiniBand's high bandwidth and low latency are well matched to HPC workloads. It allows large volumes of data to be processed concurrently, which improves the performance of scientific research applications. InfiniBand is also used for demanding workloads such as financial modeling, oil and gas exploration, and other data-intensive tasks.

In summary, the rise of large AI models has underscored the importance of InfiniBand, which has become a key enabler for supercomputing, storage, data center, and HPC workloads alike.

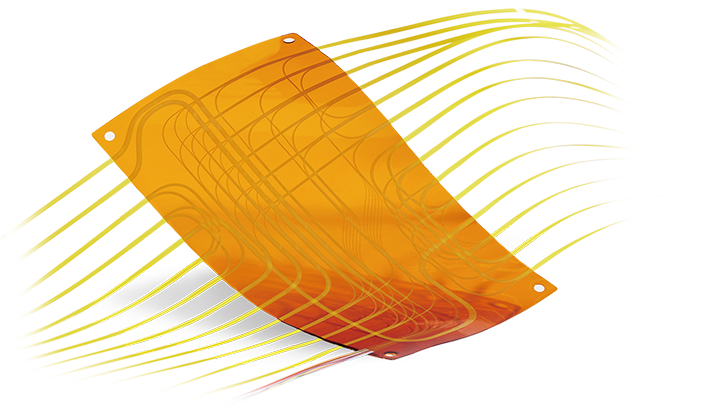
